As smartphones have grown more sophisticated over the years, so have their accompanying security measures for identity verification. Simple passwords have been replaced by thumbprints and facial recognition. However, those methods do not solve the issue of notification privacy. With smartphones now used everywhere from public places to the plant floor — and often conveying private and confidential work information — privacy is becoming paramount.

For example, handing your phone to a friend, family member or anyone else, or even leaving it briefly on a nearby surface, could expose private information through an incoming call, email, reminder or app notification. Existing features such as iOS Guided Access and Android multi-account support have been tried as solutions to this problem, but they have not solved it.

Louisiana State University computer science Assistant Professor Chen Wang believes he may have the answer. Wang is working with third-year Ph.D. student Long Huang on a gripping-hand identity verification method that confirms the correct user is holding the smartphone before potentially sensitive content is displayed. Their recent paper on this topic was published at MobiCom 2021, the annual international conference on mobile computing and networking. A short demo is also available.

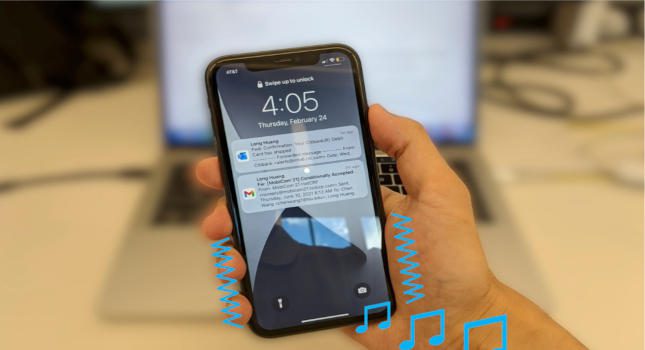

When a notification tone plays, the phone’s microphone records the sound. An artificial intelligence (AI)-based algorithm processes the recording and extracts biometric features, which it compares against the user’s feature profile, built from recordings of the user’s hand grip. If they match, verification succeeds and the notification preview is displayed on the screen. Otherwise, only the number of pending notifications is shown.
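The verification flow described above can be illustrated with a minimal Python sketch. It is not the authors' method: the paper uses an AI model, whereas this sketch swaps in a much simpler stand-in that compares the pattern of damping the hand imposes on each partial of a known tone against an enrolled profile, using cosine similarity. The tone frequencies (`TONE_BINS`), frame size, acceptance `THRESHOLD` and the synthetic recordings produced by `simulate_tone` are all hypothetical.

```python
import math

# Hypothetical parameters: a known notification tone made of four partials,
# placed on exact DFT bins of a 256-sample frame. Real tones, sample rates
# and frame sizes would differ.
FRAME = 256
TONE_BINS = (10, 40, 70, 100)
THRESHOLD = 0.9  # hypothetical acceptance threshold on cosine similarity


def bin_magnitude(samples, k):
    """Magnitude of DFT bin k of one frame (direct single-bin DFT)."""
    n = len(samples)
    re = sum(s * math.cos(2 * math.pi * k * t / n) for t, s in enumerate(samples))
    im = sum(s * math.sin(2 * math.pi * k * t / n) for t, s in enumerate(samples))
    return math.hypot(re, im)


def grip_features(samples):
    """Log magnitude at each tone partial, mean-centred so overall loudness
    cancels and only the frequency-dependent damping from the hand remains."""
    logs = [math.log(bin_magnitude(samples, k) + 1e-12) for k in TONE_BINS]
    mean = sum(logs) / len(logs)
    return [x - mean for x in logs]


def cosine(a, b):
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    if na == 0 or nb == 0:
        return 0.0
    return sum(x * y for x, y in zip(a, b)) / (na * nb)


def enroll(recordings):
    """Average the grip features of several enrollment recordings."""
    feats = [grip_features(r) for r in recordings]
    return [sum(col) / len(feats) for col in zip(*feats)]


def lock_screen_view(recording, profile, previews):
    """Show notification previews only if the recorded grip matches the
    profile; otherwise show just the number of pending notifications."""
    if cosine(grip_features(recording), profile) >= THRESHOLD:
        return previews
    n = len(previews)
    return [f"{n} pending notification{'s' if n != 1 else ''}"]


def simulate_tone(gains):
    """Synthetic recording: each partial scaled by a hand-specific gain."""
    return [sum(g * math.sin(2 * math.pi * k * t / FRAME)
                for g, k in zip(gains, TONE_BINS))
            for t in range(FRAME)]


if __name__ == "__main__":
    profile = enroll([simulate_tone([1.0, 0.3, 0.8, 0.2]),
                      simulate_tone([0.95, 0.32, 0.78, 0.22])])
    previews = ["Email: Q3 report"]
    # Owner's grip: prints ['Email: Q3 report']
    print(lock_screen_view(simulate_tone([0.98, 0.31, 0.79, 0.21]), profile, previews))
    # Someone else's grip: prints ['1 pending notification']
    print(lock_screen_view(simulate_tone([0.2, 0.9, 0.3, 1.0]), profile, previews))
```

Mean-centering the log magnitudes cancels overall volume, so a louder or quieter recording of the same grip still matches; only the relative damping across frequencies is compared, which the article attributes to differences in hand size, finger length, holding strength and hand shape.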

“We consider this an attempt for security design to embrace art,” said Wang, whose expertise is in cybersecurity and privacy, mobile sensing and computing, and wireless communications, among other areas. “We find that when playing music with a phone, our holding hands often feel the beats, which are caused by the phone surface vibrations. This is a way in which the music sound conveys information to us. Because music sounds are signals, they can be absorbed/dampened, reflected, or refracted by our hands.

“We then use the phone’s own mic to capture the remaining sounds to see how we respond to music. Because people have different hand sizes, finger lengths, holding strengths and hand shapes, the impacts on sounds are different and can be learned and distinguished by AI. Along this way, we develop a system to use the notification tones to verify the gripping hand for notification privacy protection. This is very different from prior acoustic sensing works, which all rely on dedicated sounds, inaudible or annoying to human ears.”

The project is one of two that Wang is working on, both supported by the Louisiana Board of Regents and both involving smartphones and users’ hands. The other, in collaboration with second-year Ph.D. student Ruxin Wang and computer science master’s graduate Kailyn Maiden, uses the back of the user’s phone-gripping hand for identity verification at kiosks, such as those used to order food, print tickets and check out at the grocery store. This research will be published as a late-breaking work at the 2022 ACM CHI Conference on Human Factors in Computing Systems.

“When a user holds [his or her] phone close to the kiosk for NFC-based or QR code authentication, the back of the user’s gripping hand is captured by a camera on the kiosk,” Wang said. “An AI-based method will process the gripping-hand image and compare it against the user’s registered hand image by checking the gripping hand’s shape, skin patterns/color and gripping gesture. Notice here, the user’s identity has been claimed by the traditional NFC or QR code methods as they transmit the user’s security token. Thus, here we provide a two-factor authentication to the kiosk — the security token and the gripping-hand geometry biometrics.”
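The two-factor check Wang describes can be outlined the same way. This Python sketch illustrates only the decision logic under stated assumptions and is not the researchers' implementation: the signed-token format, the shared key `KIOSK_KEY`, the `HAND_DISTANCE_LIMIT` threshold, and the premise that the gripping-hand image has already been reduced to a numeric feature vector by some model (not shown) are all hypothetical. The token identifies the claimed user, and the captured hand is then compared against that one user's registered template.

```python
import hashlib
import hmac
import math

KIOSK_KEY = b"example-kiosk-key"  # hypothetical shared signing key
HAND_DISTANCE_LIMIT = 0.5         # hypothetical match threshold


def issue_token(user_id):
    """Hypothetical token format: '<user_id>.<hex HMAC-SHA256 of user_id>'."""
    sig = hmac.new(KIOSK_KEY, user_id.encode(), hashlib.sha256).hexdigest()
    return f"{user_id}.{sig}"


def verify_token(token):
    """Factor 1: return the claimed user ID if the signature is valid, else None."""
    user_id, _, sig = token.rpartition(".")
    expected = hmac.new(KIOSK_KEY, user_id.encode(), hashlib.sha256).hexdigest()
    if user_id and hmac.compare_digest(sig, expected):
        return user_id
    return None


def authenticate(token, hand_features, registered_hands):
    """Accept only if the token is valid AND the gripping hand matches."""
    user_id = verify_token(token)
    if user_id is None or user_id not in registered_hands:
        return False
    # Factor 2: one-to-one comparison against the claimed user's template.
    return math.dist(hand_features, registered_hands[user_id]) <= HAND_DISTANCE_LIMIT


if __name__ == "__main__":
    # Hypothetical hand feature vectors from an unspecified model.
    registered = {"alice": [0.1, 0.8, 0.3], "bob": [0.9, 0.2, 0.5]}
    print(authenticate(issue_token("alice"), [0.12, 0.79, 0.31], registered))
    print(authenticate(issue_token("alice"), [0.9, 0.2, 0.5], registered))
```

Because the token already claims an identity, the hand check is a single one-to-one comparison rather than a search across all enrolled users, so a stolen phone or token alone is not enough to pass.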

Wang added that he and the students are improving the authentication systems and conducting user studies with more participants and devices. They are also examining factors that affect the systems’ practical use, including ambient noise and lighting conditions. Additionally, they are investigating potential attacks, such as a 3D-printed silicone fake hand and acoustic replay attacks.
